The native AI workspace for macOS

One native app for every AI model. Interactive maps, charts, sortable tables, threaded conversations, and agentic tools — not just another chat wrapper.

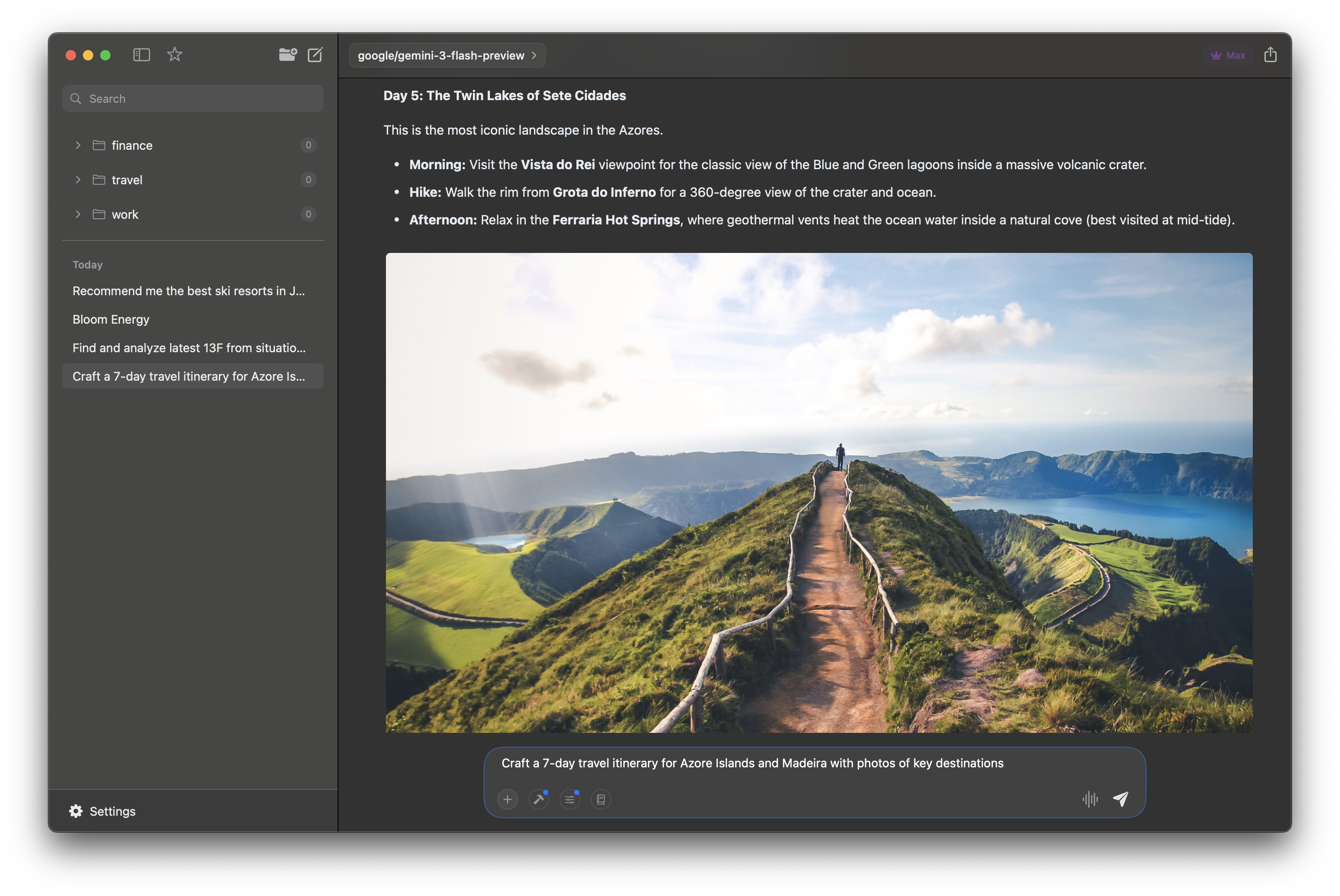

Rich Content & Embedded Media

AI-generated itineraries with inline photos and formatted details

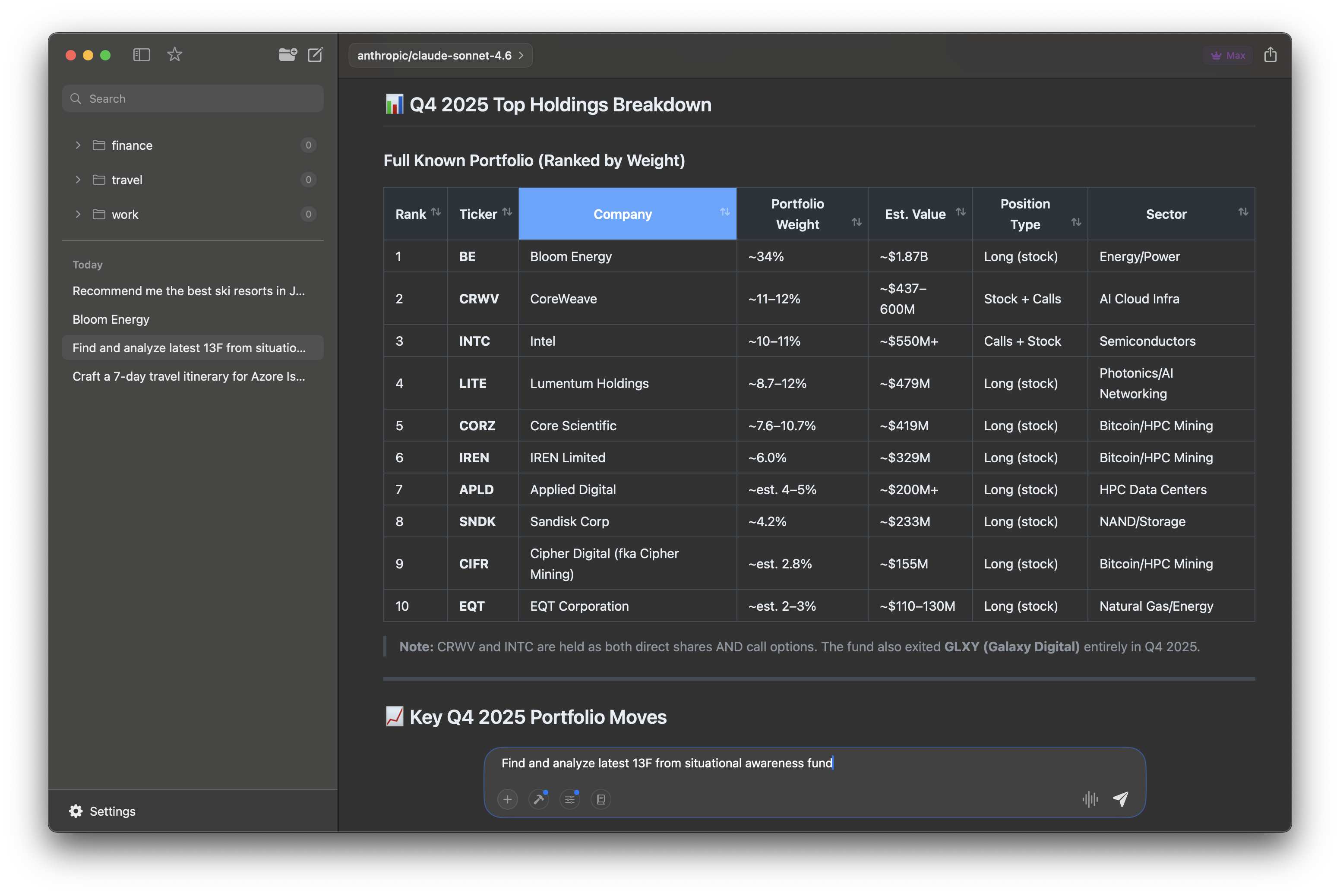

Dynamic Tables

Sortable, structured data rendered right in your conversation

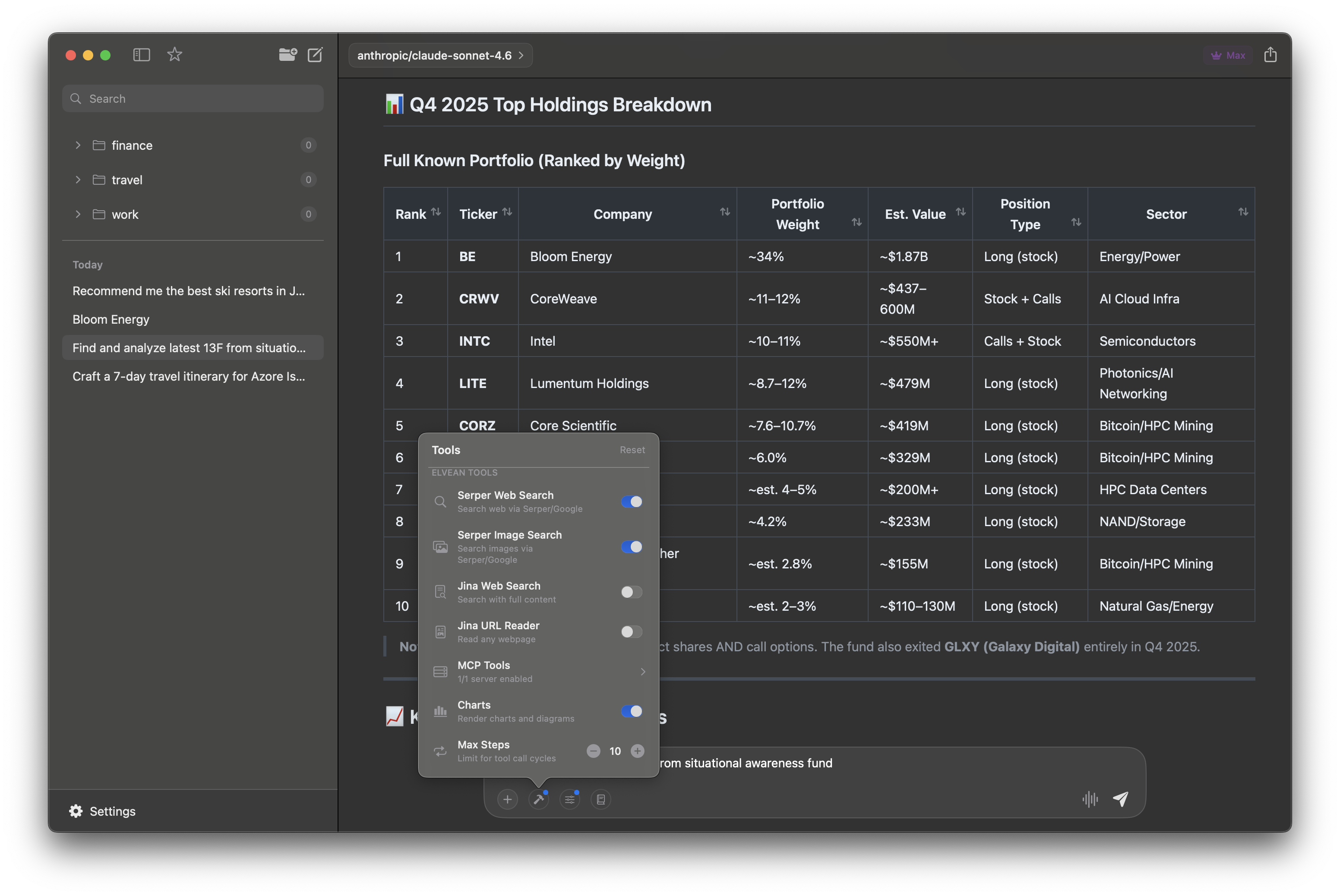

Powerful Built-in Tools

Web search, image search, MCP servers, and charts — all one click away

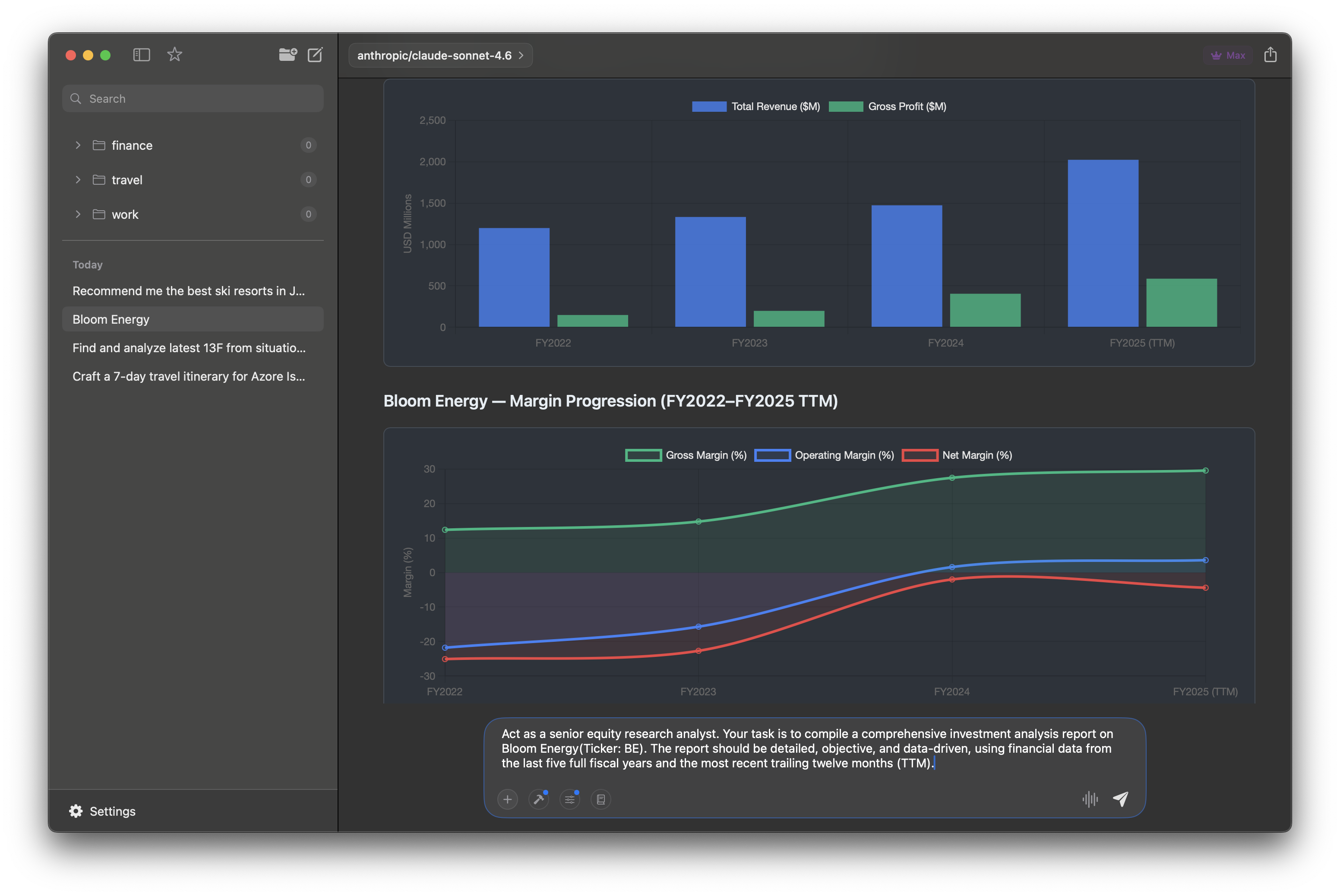

Interactive Charts & Diagrams

Visualize financial data with Chart.js and TradingView charts

Threaded Conversations

Branch into side threads for deeper analysis without losing context

Agentic Research

Multi-step tool calling that fetches, analyzes, and presents findings

Gallery Layout

Photo-rich responses with image grids fetched and arranged automatically

Native Maps

Interactive Apple Maps embedded directly in conversations with pinned locations

Features

More than a chat window

Charts, tables, threads, agentic tools, and system-wide access — built natively for macOS.

Rich UI, Not Just Chat

Interactive charts, TradingView financials, sortable tables, image galleries, and inline Markdown editing — responses that go far beyond plain text.

Agentic Tools & MCP

Multi-step tool calling, built-in web search, and one-click MCP server connections. Your AI researches, analyzes, and presents findings autonomously.

Threads & @Mentions

Slack-like threaded conversations keep context organized. Type @claude or @gpt mid-chat to get a one-off response from a different model.
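As a rough illustration of how a one-off @mention could be routed, here is a minimal sketch in Python. It is not Elvean's implementation: the alias table, the model identifiers, and the `route` function are hypothetical, and it assumes the mention appears at the start of the message.

```python
import re

# Hypothetical alias table; the identifiers are placeholders, not Elvean's configuration.
ALIASES = {"claude": "example-claude-model", "gpt": "example-gpt-model"}

# A leading "@alias" followed by the rest of the message.
MENTION = re.compile(r"^@(\w+)\s+(.*)$", re.DOTALL)

def route(message: str, default_model: str) -> tuple[str, str]:
    """Return (model, prompt). A known leading @alias overrides the model for this message only."""
    match = MENTION.match(message.strip())
    if match and match.group(1).lower() in ALIASES:
        return ALIASES[match.group(1).lower()], match.group(2).strip()
    # No recognized mention: keep the conversation's current model and the message as typed.
    return default_model, message
```

Because the function returns the model alongside the prompt instead of changing any stored setting, the next message without a mention falls back to the conversation's default model.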

System-Wide AI

A floating prompt panel lets you run AI completions on selected text in any app without switching windows. Global hotkeys and menu bar access are included too.

Fork & Branch Chats

Branch sub-conversations from any message to explore different directions without losing your original thread.

Prompt Library

Save your best prompts with {{variable}} placeholders, organize by category, and reuse them instantly with Cmd+/.
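To make the `{{variable}}` idea concrete, here is a minimal sketch of placeholder substitution in Python. It only illustrates the concept: the exact placeholder syntax Elvean accepts (allowed characters, inner whitespace) is an assumption, and the `placeholders` and `fill` helpers are hypothetical names.

```python
import re

# Matches {{name}} with optional inner whitespace (assumed syntax).
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")

def placeholders(template: str) -> list[str]:
    """Return placeholder names in order of first appearance, without duplicates."""
    seen = []
    for name in PLACEHOLDER.findall(template):
        if name not in seen:
            seen.append(name)
    return seen

def fill(template: str, values: dict[str, str]) -> str:
    """Substitute each {{name}} with its value; unknown placeholders are left untouched."""
    def replace(match):
        return values.get(match.group(1), match.group(0))
    return PLACEHOLDER.sub(replace, template)

prompt = "Translate the following text into {{language}}: {{text}}"
print(placeholders(prompt))
print(fill(prompt, {"language": "French", "text": "Good morning"}))
```

Leaving unknown placeholders in place, instead of raising an error, makes it easy to spot which variables still need a value before the prompt is sent.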

Every Model, One App

Ollama, Claude, OpenAI, Gemini, Grok, Groq, OpenRouter, or any OpenAI-compatible API — switch instantly without leaving the app.

Apple Silicon Optimized

Native SwiftUI built for macOS. Hardware-accelerated inference via Metal for blazing-fast local model performance.

Private by Default

Run models locally with Ollama — no telemetry, no data collection. API keys stored in the macOS Keychain. Your data stays yours.

What's New

Latest updates

Version 1.2.5

Improved onboarding flow and UI layout fixes.

Version 1.2.3 (Public Beta)

Native maps integration, video and photo web search, and interactive charts and tables.

Version 1.0 — Hello, World (Private Beta)

The first private beta release of Elvean. Local AI inference, prompt templates, and a beautiful native macOS experience.

Get Started

Ready to try Elvean?

Private AI that just works. Download, open, chat — no account required.